Next week, Barbara Prainsack and I will be holding a symposium on Agentic AI in Hybrid Societies at the Austrian Academy of Sciences (Democracy in Digital Societies; DEMGES). We have a fabulous line-up, including young speakers from the Studienstiftung who’ll bring in their perspectives on AI and societal challenges, a voice often overlooked in public debate. Topics range from the technical foundations of agentic AI and Europe’s digital sovereignty to global health governance and autonomous warfare. The day closes with a future-oriented panel discussion. We’re looking forward to seeing you there! The full program can be found here.

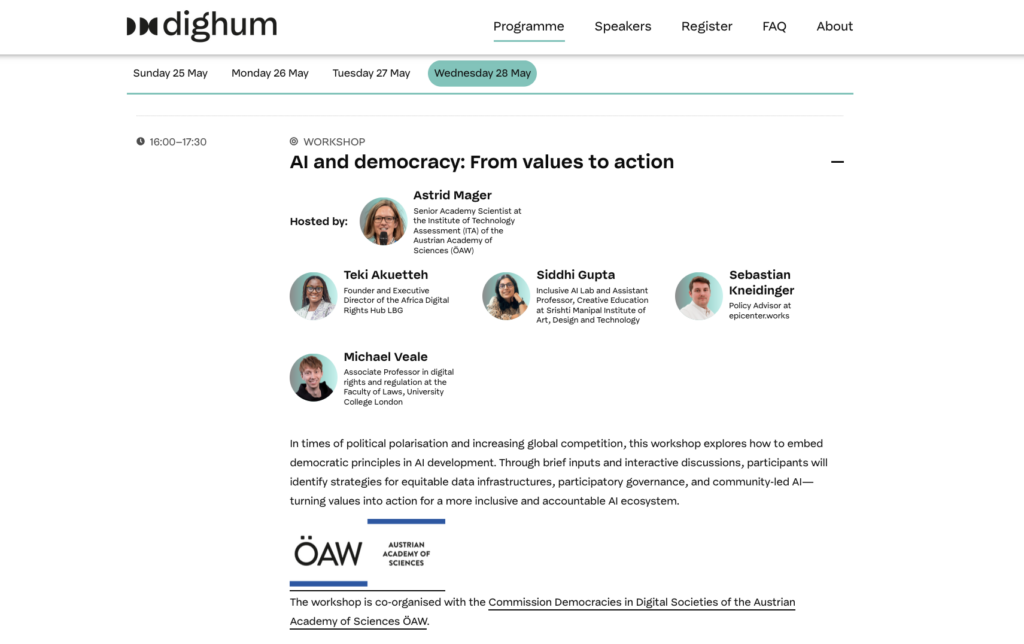

From 9 to 12 June, I’m co-organizing a massive AI Festival in Utrecht/Amsterdam together with Payal Arora from the Inclusive AI Lab. The festival comprises hands-on workshops, field trips, creative sessions, and a PhD-led day. Together with Mirko Tobias Schäfer from the Data School (Utrecht Univ.), I’ll be leading a workshop on Situated Ethics: From values to action. It asks how we can move beyond abstract ethics principles by learning from real-world experience and expertise, particularly that of policy makers, industry, and civil society from the Global South. The next day, we’ll go behind the scenes of AI by visiting the Data Protection Authority and the Ministry of Labor and Social Affairs in The Hague. I’m really looking forward to peeking into the mundane practices of AI use and their (often unintended) side effects, ranging from data bias and discrimination to sociotechnical transformations of work routines, and again asking how governments can respond to these developments in practice, e.g. by creating “ethics officers”. The overall topic of the festival is Reclaiming Techno Optimism. (full program)

Finally, together with Katja Mayer, I’m currently putting together two sessions for the Digital Humanism Conference in Vienna (24-26 June 2026). One of them will feature Payal Arora and Gilberto Vieira from Rio de Janeiro (previously data_labe), who will discuss how to move From Pessimism to Promise (the title of Payal’s new book) and what we can learn from Global South perspectives and practices. The program will be out soon!